Introduction

When an API becomes slow, the first instinct is usually:

“The database must be the bottleneck.”

That assumption is often wrong.

In this investigation, we tested a Spring Boot API with PostgreSQL and discovered that even when the database performs well, the API can still be slow — sometimes over 1 second per request.

The Problem

During load testing, we observed:

- Most requests were very fast

- Some requests were extremely slow (up to ~2 seconds)

This inconsistency made the system unreliable.

System Architecture

To understand where latency might occur, we mapped the request flow:

Client ↓ API Gateway ↓ Spring Boot Controller ↓ Service Layer ↓ Repository (JPA) ↓ PostgreSQL

Each layer was a potential source of delay.

Step 1 — Verify Database Performance

We ran the query directly in PostgreSQL:

EXPLAIN ANALYZE SELECT * FROM transaction_record WHERE account_id = 100;

Result

- Execution time: ~40–50 ms

Conclusion

The database is fast and efficient.

Step 2 — Measure API Performance

We used Apache JMeter to simulate real traffic.

Test Configuration

- 200 concurrent users

- 10 loops

- Dataset: ~200,000 records

- Hardware: Ryzen 5 5600X

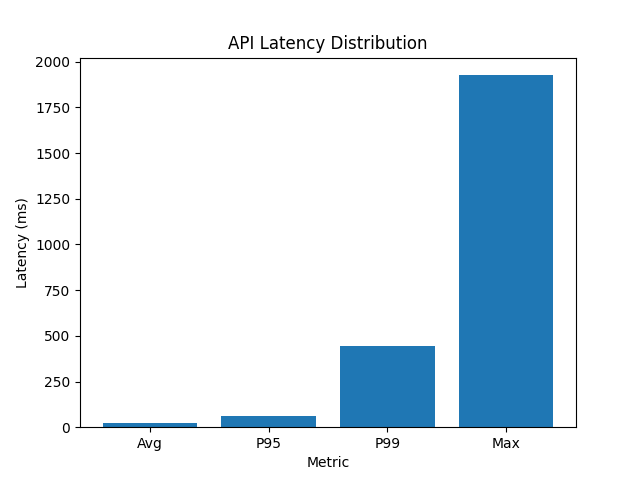

API Performance Metrics

| Metric | Value |

|---|---|

| Average latency | 23 ms |

| Median (P50) | 4 ms |

| P95 latency | 63 ms |

| P99 latency | 444 ms |

| Max latency | 1926 ms |

Latency Analysis

Most requests completed quickly:

- P50: 4 ms

But tail latency was significant:

- P99: 444 ms

- Max: ~2 seconds

This indicates a tail latency problem, where a small percentage of requests are very slow.

Step 3 — Measure Each Layer

We added logging inside the service layer:

long start = System.currentTimeMillis();List<Transaction> data = repository.findByAccountId(id);long dbTime = System.currentTimeMillis();process(data);long end = System.currentTimeMillis();log.info("DB time={} ms", dbTime - start);

log.info("Processing time={} ms", end - dbTime);

log.info("Total time={} ms", end - start);Real Results

Example logs:

DB time = 19 ms Processing time = 1112 ms Total time = 1131 ms

DB time = 24 ms Processing time = 1291 ms Total time = 1315 ms

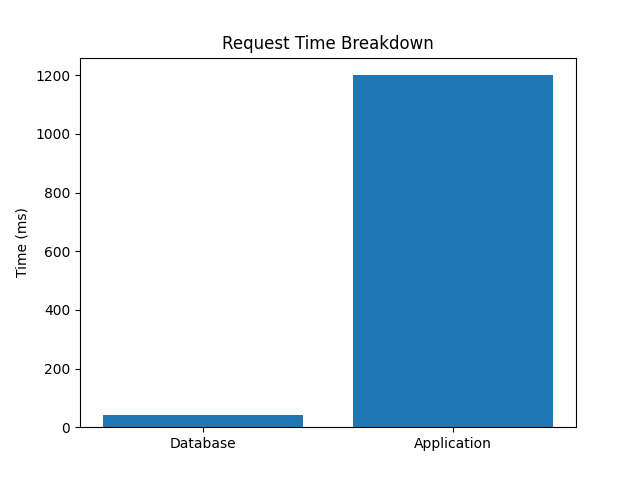

Request Time Breakdown

| Layer | Time |

|---|---|

| Database query | 20–50 ms |

| Application processing | 1100–1500 ms |

| Total | 1200–1600 ms |

Root Cause

The issue was not the database.

It was the application layer.

Example of problematic code:

for (int i = 0; i < 10_000_000; i++) {

Math.sqrt(i * id);

}This simulated real-world problems, such as:

- Heavy data transformation

- Inefficient loops

- Excessive computation

- Serialization overhead

Why Only Some Requests Were Slow

The slowdown was not consistent. This is due to:

1. JVM JIT Compilation

Some requests run optimized code, others do not.

2. CPU Scheduling

Threads compete for CPU under load.

3. Garbage Collection

Occasional pauses increase latency.

Key Insight: Tail Latency Matters

Even if average latency looks good:

- Users experience the slowest requests, not the average

This is why:

P95 and P99 are more important than average latency.

Optimization

We reduced unnecessary processing:

- Removed heavy loops

- Simplified logic

- Optimized transformations

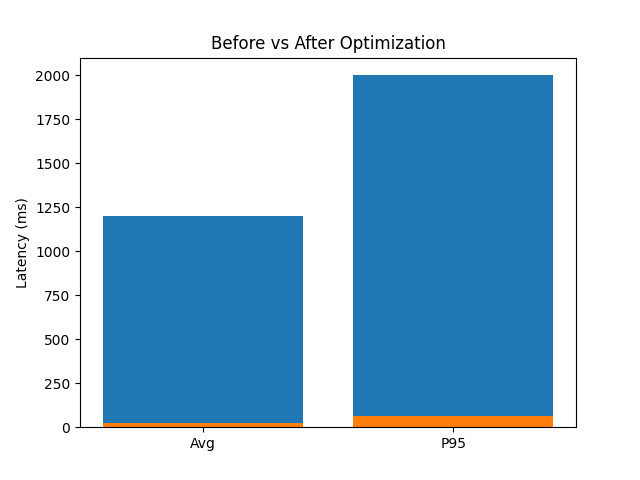

Before vs After

| Metric | Before | After |

|---|---|---|

| Avg latency | ~1200 ms | 23 ms |

| P95 | ~2000 ms | 63 ms |

| DB query | ~40 ms | ~40 ms |

Performance chart

- Latency Distribution: The latency distribution shows a strong tail, with rare spikes reaching ~2 seconds.

- Before vs After: API latency improved significantly after reducing application-layer processing.

- Request Breakdown: Most latency came from application logic, not the database.

Final Conclusion

This experiment demonstrates a key lesson:

A fast database does not guarantee a fast API.

In this case:

- PostgreSQL was performing well

- The real bottleneck was the application logic

Key Takeaways

Application code can be the main bottleneck

Always measure each layer independently

Don’t assume the database is the issue

Focus on P95/P99, not just averages