Introduction

Improving API performance is a common challenge in backend development.

Even with optimized queries and indexing, database calls can still introduce latency — especially under load.

To understand the real impact of caching, I ran a simple experiment:

What happens when Redis is added to a Spring Boot API?

Test Setup

The test was intentionally simple and controlled.

- Backend: Spring Boot

- Database: PostgreSQL

- Cache: Redis (local instance)

- Endpoint tested: `GET /users/{id}`

- Load testing tool: JMeter

- Same load applied across all scenarios

Test Scenarios

1. No Cache (Baseline)

Every request goes directly to the database.

2. Mixed Load (Realistic Case)

- ~80% cache hits

- ~20% cache misses

This approximates a typical read-heavy production workload.

3. Cache Hit (Best Case)

- Same request repeated

- Fully served from Redis

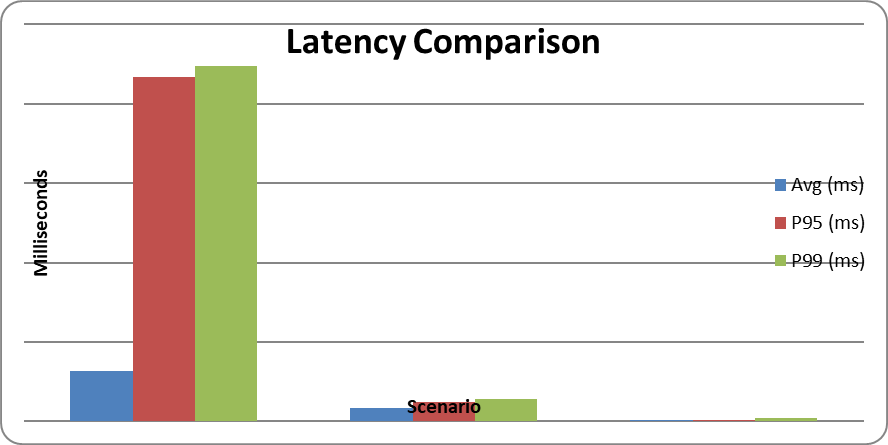
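All three scenarios exercise the same read path. They differ only in how often the cache lookup succeeds. The following is a minimal cache-aside sketch in plain Java. It assumes a `ConcurrentHashMap` as a stand-in for Redis and a hypothetical `loadFromDatabase` method in place of the PostgreSQL query. In a Spring Boot API, this logic usually comes from Spring's cache abstraction (for example `@Cacheable`) backed by Redis rather than hand-written code.

```java
import java.util.Map;
import java.util.concurrent.ConcurrentHashMap;
import java.util.concurrent.atomic.AtomicInteger;
import java.util.function.Function;

// Cache-aside read path. The ConcurrentHashMap stands in for Redis and the
// loader function stands in for the PostgreSQL query (both are assumptions).
public class CacheAsideDemo {

    public static final class CacheAside<K, V> {
        private final Map<K, V> cache = new ConcurrentHashMap<>();
        private final Function<K, V> loader;
        public final AtomicInteger hits = new AtomicInteger();
        public final AtomicInteger misses = new AtomicInteger();

        public CacheAside(Function<K, V> loader) {
            this.loader = loader;
        }

        public V get(K key) {
            V cached = cache.get(key);
            if (cached != null) {
                hits.incrementAndGet();   // cache hit: the database is never touched
                return cached;
            }
            misses.incrementAndGet();     // cache miss: query the database...
            V loaded = loader.apply(key);
            if (loaded != null) {
                cache.put(key, loaded);   // ...and populate the cache for the next request
            }
            return loaded;
        }
    }

    // Hypothetical stand-in for "SELECT ... FROM users WHERE id = ?"
    static final AtomicInteger dbCalls = new AtomicInteger();

    static String loadFromDatabase(Long id) {
        dbCalls.incrementAndGet();
        return "user-" + id;
    }

    public static void main(String[] args) {
        CacheAside<Long, String> users = new CacheAside<>(CacheAsideDemo::loadFromDatabase);
        // Same request repeated (the "Cache Hit" scenario): only the first call reaches the database.
        for (int i = 0; i < 100; i++) {
            users.get(42L);
        }
        System.out.println("hits=" + users.hits + " misses=" + users.misses + " dbCalls=" + dbCalls);
    }
}
```

Running `main` prints `hits=99 misses=1 dbCalls=1`. One caveat: two concurrent misses for the same key can both query the database. For an in-process map, `ConcurrentHashMap.computeIfAbsent` would avoid that, at the cost of blocking other updates to that key while the load runs.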

Benchmark Results

| Scenario | Avg (ms) | P95 (ms) | P99 (ms) |
|---|---|---|---|
| No Cache | 31.82 | 217 | 224 |
| Mixed | 8.24 | 12 | 14 |
| Cache Hit | 0.95 | 1 | 2 |

Analysis

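Redis Cut Average Latency by ~33x

The most obvious improvement is in average response time:

- From 31.82 ms (no cache) → 0.95 ms (cache hit)

This is the best case, because every request was served from Redis. It also shows that about 97% of the original average response time disappeared once the database call was skipped.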

P95 Latency Improved Even More

Average latency is useful, but P95 (the latency that 95% of requests stay under) better reflects what the slower requests experience.

- From 217 ms → 1 ms

In the cache-hit scenario, slow requests were almost completely eliminated.
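A small calculation shows the gap between average and P95. This sketch computes the average, P95, and P99 from raw latency samples using the nearest-rank method. JMeter's reports may use a slightly different percentile definition, and the sample values are invented purely for illustration.

```java
import java.util.Arrays;
import java.util.Locale;

// Average and nearest-rank percentiles over raw latency samples (milliseconds).
public class LatencyStats {

    // p-th percentile (1..100), nearest-rank: the smallest sample such that
    // at least p% of all samples are less than or equal to it.
    static long percentile(long[] samplesMs, int p) {
        if (samplesMs.length == 0 || p < 1 || p > 100) {
            throw new IllegalArgumentException("need samples and 1 <= p <= 100");
        }
        long[] sorted = samplesMs.clone();
        Arrays.sort(sorted);
        // ceil(p * n / 100) with integer arithmetic, avoiding floating-point rounding
        int rank = (p * sorted.length + 99) / 100;
        return sorted[rank - 1];
    }

    static double average(long[] samplesMs) {
        return Arrays.stream(samplesMs).average().orElse(Double.NaN);
    }

    public static void main(String[] args) {
        // Illustrative data only: 90 fast requests (10 ms) and 10 slow ones (200 ms).
        long[] samples = new long[100];
        Arrays.fill(samples, 0, 90, 10);
        Arrays.fill(samples, 90, 100, 200);
        System.out.printf(Locale.ROOT, "avg=%.1f p95=%d p99=%d%n",
                average(samples), percentile(samples, 95), percentile(samples, 99));
        // prints: avg=29.0 p95=200 p99=200
    }
}
```

With 10 of 100 requests at 200 ms, the average is only 29 ms, while P95 and P99 both land on 200 ms. The average hides exactly this kind of tail.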

Mixed Scenario Shows Real Value

In a realistic workload:

- Average latency dropped to 8.24 ms

- About 4x faster than database-only

This is where Redis provides the most practical benefit.
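As a rough sanity check, the mixed result lines up with a weighted average of the other two scenarios. Let $h$ be the hit ratio. Treat each hit as costing the cache-hit average and each miss as costing the no-cache average. This is a simplification, because a real miss also pays for the Redis lookup and the cache write.

$$
\bar{t}_{\text{mixed}} \approx h\,\bar{t}_{\text{hit}} + (1 - h)\,\bar{t}_{\text{db}} = 0.8 \times 0.95 + 0.2 \times 31.82 = 0.76 + 6.364 \approx 7.12\ \text{ms}
$$

The measured 8.24 ms is about 1 ms higher, plausibly because of that extra work on each miss. The formula also shows where the time goes. The 20% of requests that miss contribute roughly 6.4 ms of the estimated 7.1 ms. Under these assumptions, each additional percentage point of hit ratio would save about 0.31 ms on average.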

Latency Became Predictable

Without caching:

- Spikes above 200 ms: roughly 1 in 20 requests took 217 ms or longer (P95)

With Redis:

- Nearly flat response times: P99 of 14 ms under mixed load and 2 ms with full cache hits

This leads to a smoother and more consistent user experience.

Key Insight

Redis does not make your database faster.

It reduces how often your application needs to use the database.

When to Use Redis

Redis is most effective when:

- Data is frequently read

- Data changes infrequently

- Low latency is critical
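Infrequently changing data matters because a cached value is only refreshed when it expires or is evicted. Redis handles expiry natively (for example with `SET key value EX seconds`), but the idea fits in a few lines of plain Java. This is a minimal sketch of a hypothetical `TtlCache` class. It takes an injectable clock so expiry can be tested without sleeping.

```java
import java.time.Duration;
import java.util.Map;
import java.util.concurrent.ConcurrentHashMap;
import java.util.function.LongSupplier;

// A tiny TTL cache: each entry records when it expires, and expired entries
// are treated as misses. Redis does this natively; this is only an illustration.
public class TtlCache<K, V> {

    private static final class Entry<T> {
        final T value;
        final long expiresAtNanos;

        Entry(T value, long expiresAtNanos) {
            this.value = value;
            this.expiresAtNanos = expiresAtNanos;
        }
    }

    private final Map<K, Entry<V>> entries = new ConcurrentHashMap<>();
    private final long ttlNanos;
    private final LongSupplier clock; // time source in nanoseconds, e.g. System::nanoTime

    public TtlCache(Duration ttl, LongSupplier clock) {
        this.ttlNanos = ttl.toNanos();
        this.clock = clock;
    }

    public void put(K key, V value) {
        entries.put(key, new Entry<>(value, clock.getAsLong() + ttlNanos));
    }

    // Returns null on a miss or when the entry has expired.
    public V get(K key) {
        Entry<V> e = entries.get(key);
        if (e == null) {
            return null;
        }
        // Compare via subtraction, the overflow-safe idiom for nanoTime-style clocks.
        if (clock.getAsLong() - e.expiresAtNanos >= 0) {
            entries.remove(key, e); // lazily drop the stale entry (only if unchanged)
            return null;
        }
        return e.value;
    }

    public static void main(String[] args) {
        long[] now = {0L}; // fake clock for the demo
        TtlCache<Long, String> cache = new TtlCache<>(Duration.ofSeconds(60), () -> now[0]);
        cache.put(42L, "user-42");
        System.out.println(cache.get(42L)); // user-42
        now[0] += Duration.ofSeconds(61).toNanos();
        System.out.println(cache.get(42L)); // null
    }
}
```

Production code would pass `System::nanoTime` as the clock. The TTL puts an upper bound on how stale a cached value can get, which is why slowly changing data suits this approach.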

When Redis Might Not Help

- Highly dynamic or real-time data

- Complex cache invalidation requirements

- Endpoints that are rarely read
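Invalidation is where caching gets harder, because every write path has to keep the cache consistent. A common approach is to update the database first and then delete the cached key, so the next read reloads fresh data. This sketch assumes two maps as stand-ins, one for the `users` table and one for Redis. The class and method names are hypothetical.

```java
import java.util.Map;
import java.util.concurrent.ConcurrentHashMap;

// Write path with cache invalidation. Both maps are stand-ins (assumptions):
// `database` for PostgreSQL and `cache` for Redis.
public class UserStore {

    private final Map<Long, String> database = new ConcurrentHashMap<>();
    private final Map<Long, String> cache = new ConcurrentHashMap<>();

    // Cache-aside read: serve from the cache, otherwise load and populate.
    public String findById(long id) {
        String cached = cache.get(id);
        if (cached != null) {
            return cached;
        }
        String fromDb = database.get(id);
        if (fromDb != null) {
            cache.put(id, fromDb);
        }
        return fromDb;
    }

    public void updateName(long id, String name) {
        database.put(id, name); // 1. write to the source of truth
        cache.remove(id);       // 2. delete the stale entry instead of updating it in place
    }

    public boolean isCached(long id) {
        return cache.containsKey(id);
    }

    public static void main(String[] args) {
        UserStore store = new UserStore();
        store.updateName(1L, "Alice");
        System.out.println(store.findById(1L)); // Alice (now cached)
        store.updateName(1L, "Alicia");
        System.out.println(store.findById(1L)); // Alicia (reloaded after invalidation)
    }
}
```

Even this pattern leaves a small race. A read that misses and loads the old value just before an update can write that stale value back into the cache after the delete. Pairing invalidation with a TTL limits how long such an entry survives. Edge cases like this are why complex invalidation requirements weaken the case for caching.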

Limitations of This Test

This experiment used:

- A local Redis instance (negligible network latency)

- Simple data model

- Single endpoint

In real-world systems:

- Network overhead may increase latency slightly

- Cache strategy (key design, TTLs, invalidation) becomes more important

Conclusion

Adding Redis to a Spring Boot API resulted in:

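- Up to ~33x lower average latency (31.82 ms → 0.95 ms with full cache hits)

- Much lower tail latency: P95 fell from 217 ms to 12 ms under mixed load and to 1 ms with full cache hits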

- More stable and predictable performance

For read-heavy APIs, caching is not just an optimization; it can fundamentally change system behavior.

Final Thought

If your API feels slow, the problem may not be your database.

You might just be calling it too often.