Your API Is Fast… Until It Isn’t

Your dashboard says everything is fine.

- Average latency: 3 ms

- Error rate: 0%

- Throughput: 14,000+ req/sec

Looks perfect.

But here’s what your users actually experience:

Some requests suddenly take 500+ ms.

No alerts.

No obvious errors.

Just random slow responses that are almost impossible to explain.

So what’s happening?

In this experiment, I discovered that the problem appears at a very specific moment:

When the Redis cache entry expires.

And the most dangerous part?

👉 You won’t see it if you only look at averages.

Caching is supposed to make systems fast.

And most of the time, Redis delivers exactly that.

The rest of this article looks at what really happens at the moment of expiration, and why it creates hidden performance issues that average metrics completely miss.

Experiment Overview

To simulate real-world behavior, I built a simple setup:

- Spring Boot API

- Redis cache with TTL = 30 seconds

- Simulated database latency (100–300 ms)

- JMeter load testing

API Endpoint

GET /api/cache/test/1

All requests target the same key, so the test models a single hot key: every request shares one cache entry and therefore one expiration moment.
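To make the setup concrete, here is a minimal sketch of the read path such an endpoint typically follows (cache-aside with a TTL). It is not the experiment's code: the class `CacheAsideSketch`, its in-memory map standing in for Redis, the injectable clock, and the `dbCalls` counter are all assumptions made so the example runs without Spring or a Redis server.

```java
import java.util.concurrent.ConcurrentHashMap;
import java.util.concurrent.ThreadLocalRandom;
import java.util.concurrent.atomic.AtomicInteger;
import java.util.function.LongSupplier;

// Hypothetical in-memory stand-in for the Redis-backed endpoint (not the experiment's code).
class CacheAsideSketch {
    // A cached value plus the timestamp after which it counts as expired.
    static final class Entry {
        final String value;
        final long expiresAtMillis;

        Entry(String value, long expiresAtMillis) {
            this.value = value;
            this.expiresAtMillis = expiresAtMillis;
        }
    }

    private final ConcurrentHashMap<String, Entry> cache = new ConcurrentHashMap<>();
    private final long ttlMillis;
    private final LongSupplier clock; // injectable so expiry can be exercised without waiting 30 s
    final AtomicInteger dbCalls = new AtomicInteger();

    CacheAsideSketch(long ttlMillis, LongSupplier clock) {
        this.ttlMillis = ttlMillis;
        this.clock = clock;
    }

    String get(String key) {
        long now = clock.getAsLong();
        Entry entry = cache.get(key);
        if (entry != null && now < entry.expiresAtMillis) {
            return entry.value; // cache hit: the fast path
        }
        String value = loadFromDatabase(key); // miss or expired: the slow path
        cache.put(key, new Entry(value, now + ttlMillis));
        return value;
    }

    // Simulated database read with 100-300 ms of latency.
    private String loadFromDatabase(String key) {
        dbCalls.incrementAndGet();
        try {
            Thread.sleep(ThreadLocalRandom.current().nextLong(100, 301));
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
        }
        return "value-" + key;
    }
}
```

With `new CacheAsideSketch(30_000, System::currentTimeMillis)`, the first `get("1")` pays the simulated database latency and later calls return from memory until 30 seconds have passed. Nothing in `get` stops several threads from taking the slow path at once, which is the gap the rest of the article is about.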

Real Performance Results

From the JMeter Summary Report:

- Total Requests: 1,734,951

- Average Latency: 3 ms

- Maximum Latency: 556 ms

- Throughput: 14,462 requests/sec

- Error Rate: 0%

At first glance, the system looks extremely fast and stable.

But this is where things get misleading.

The Hidden Problem

Despite the low average latency, some requests took over 500 ms.

These spikes occurred precisely when:

- The Redis cache expired

- Requests fell back to the database

- The cache was rebuilt

Because these slow requests were rare compared to the total volume, they were almost invisible in the average.

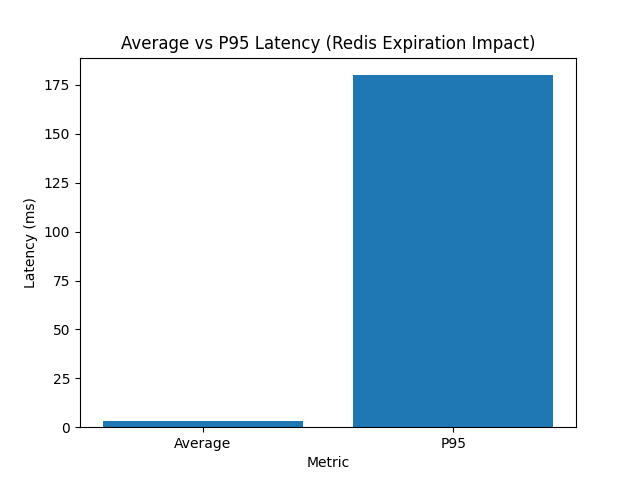

Why Average Latency Fails

Average latency smooths everything into a single number.

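In this case:

- The overwhelming majority of requests → fast (3–5 ms)

- A tiny fraction of requests (well under 1%) → slow (200–500 ms)

It has to be well under 1%: if even 1% of requests took 200 ms, they would add 2 ms to the average on their own.

Result:

Average = 3 ms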

This hides the actual user experience during cache expiration.
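A quick way to see how little a rare slow request moves the mean is to compute it on synthetic data. The sketch below is illustrative only: the class `LatencyStatsSketch`, the sample sizes, and the fixed 3 ms and 200 ms values are assumptions, not measurements from the experiment. The percentile uses the nearest-rank definition.

```java
import java.util.Arrays;

class LatencyStatsSketch {
    static double mean(long[] samples) {
        return Arrays.stream(samples).average().orElse(0.0);
    }

    // Nearest-rank percentile: the smallest sample with at least p percent of samples at or below it.
    static long percentile(long[] samples, double p) {
        long[] sorted = samples.clone();
        Arrays.sort(sorted);
        int rank = (int) Math.ceil(p * sorted.length / 100.0);
        return sorted[Math.max(rank, 1) - 1];
    }

    // Synthetic latencies: `slow` requests at slowMs, all remaining requests at fastMs.
    static long[] synthetic(int total, int slow, long fastMs, long slowMs) {
        long[] samples = new long[total];
        Arrays.fill(samples, fastMs);
        Arrays.fill(samples, 0, slow, slowMs);
        return samples;
    }
}
```

For 100,000 requests with 1,000 of them at 200 ms and the rest at 3 ms, `mean` returns 4.97 ms. With only 100 slow requests (0.1%), the mean drops to about 3.2 ms and `percentile(samples, 99)` is still 3 ms, while `percentile(samples, 100)` (the maximum) is 200 ms. A whole-run average, and even a whole-run P99, can look flat while individual users wait hundreds of milliseconds.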

This chart shows why average latency is misleading. While the average remains low at 3 ms, P95 spikes dramatically during cache expiration, revealing real user impact.

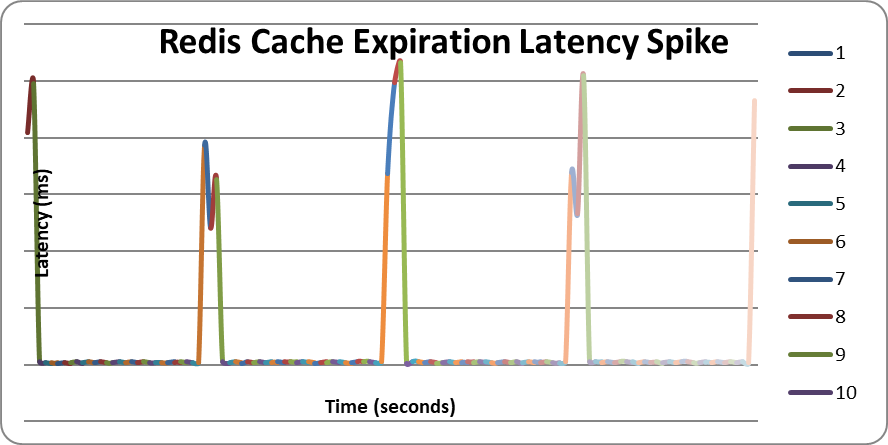

Visualizing the Behavior

To make the pattern clearer, I generated a controlled dataset simulating cache expiration cycles.

The behavior follows a repeating pattern:

- Stable low latency (cache hit)

- Sudden spike (cache expired)

- Recovery (cache rebuilt)

This creates a wave-like latency pattern over time.

What Causes the Spike?

At the moment of expiration:

- Cache entry is removed

- Multiple concurrent requests miss the cache

- All requests hit the database simultaneously

- Latency increases sharply

- Cache is repopulated

This phenomenon is known as:

Cache Stampede
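The sequence above can be reproduced in a few lines. This is a sketch under assumptions, not the experiment's code: `StampedeSketch`, the thread pool standing in for concurrent HTTP requests, and the plain map standing in for Redis are all hypothetical. It releases a batch of reads against an empty cache and counts how many of them reach the simulated database.

```java
import java.util.concurrent.ConcurrentHashMap;
import java.util.concurrent.CountDownLatch;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.TimeUnit;
import java.util.concurrent.atomic.AtomicInteger;

class StampedeSketch {
    // Fires `concurrentRequests` reads at an empty cache and returns how many reached the database.
    static int databaseCallsAfterExpiry(int concurrentRequests, long dbLatencyMillis) {
        ConcurrentHashMap<String, String> cache = new ConcurrentHashMap<>(); // empty: entry just expired
        AtomicInteger dbCalls = new AtomicInteger();
        CountDownLatch startGate = new CountDownLatch(1);
        ExecutorService pool = Executors.newFixedThreadPool(concurrentRequests);

        for (int i = 0; i < concurrentRequests; i++) {
            pool.submit(() -> {
                startGate.await();                  // hold every request until the gate opens
                if (cache.get("1") == null) {       // requests arriving before the rebuild all miss
                    dbCalls.incrementAndGet();
                    Thread.sleep(dbLatencyMillis);  // simulated slow query
                    cache.put("1", "value");        // cache rebuilt
                }
                return null;
            });
        }

        startGate.countDown(); // release all requests together
        pool.shutdown();
        try {
            pool.awaitTermination(10, TimeUnit.SECONDS);
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
        }
        return dbCalls.get();
    }
}
```

Calling `StampedeSketch.databaseCallsAfterExpiry(20, 100)` will typically report close to 20 database calls for a single key, because every request that arrives before the first rebuild finishes sees the same miss. The exact count depends on thread scheduling.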

Why It Matters in Production

In high-traffic systems:

- Thousands of requests may hit the same key

- Keys cached at the same time with the same TTL expire at the same time

- Database load spikes suddenly

This can lead to:

- Latency instability

- Resource contention

- Cascading failures

Key Insight

Cache expiration is not a passive event — it is an active performance trigger.

And if you rely only on average metrics, you may never notice it.

What You Should Measure Instead

To properly detect this issue, monitor the following over short time windows rather than over the whole run:

- P95 latency (critical)

- P99 latency

- Cache hit ratio

- Database query spikes

These metrics expose what averages hide.

How to Prevent Cache Stampede

1. Add TTL Jitter

Duration.ofSeconds(30 + random.nextInt(10))

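Prevents many keys from expiring at the same instant. Jitter spreads expirations across keys; it does not stop a stampede on a single hot key like the one in this experiment, which needs one of the techniques below.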
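Wrapped in a helper, the jitter expression might look like the sketch below. The class and method names are hypothetical, and `ThreadLocalRandom` is swapped in for the `random` field so the helper is safe to call from many request threads without sharing a generator.

```java
import java.time.Duration;
import java.util.concurrent.ThreadLocalRandom;

class TtlJitterSketch {
    // Base TTL plus a random extra of 0 to (maxJitterSeconds - 1) seconds.
    static Duration jitteredTtl(long baseSeconds, int maxJitterSeconds) {
        int extra = ThreadLocalRandom.current().nextInt(maxJitterSeconds);
        return Duration.ofSeconds(baseSeconds + extra);
    }
}
```

`TtlJitterSketch.jitteredTtl(30, 10)` returns a TTL between 30 and 39 seconds, which would then be passed to whatever call writes the entry to Redis.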

2. Use a Distributed Lock

Ensure only one request rebuilds the cache while the others wait for the result.
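The idea is easiest to show inside a single JVM. The sketch below is an in-process analogue ("single flight"), not a distributed lock: `SingleFlightSketch`, its maps, and the `loader` function are hypothetical. With several application instances, the claim step would have to go through a shared store instead, for example a Redis `SET` with the `NX` and `PX` options.

```java
import java.util.concurrent.CompletableFuture;
import java.util.concurrent.ConcurrentHashMap;
import java.util.concurrent.atomic.AtomicInteger;
import java.util.function.Function;

// In-process "single flight": one loader per key, everyone else waits for its result.
class SingleFlightSketch {
    private final ConcurrentHashMap<String, String> cache = new ConcurrentHashMap<>();
    private final ConcurrentHashMap<String, CompletableFuture<String>> inFlight = new ConcurrentHashMap<>();
    private final Function<String, String> loader; // hypothetical database read
    final AtomicInteger dbCalls = new AtomicInteger();

    SingleFlightSketch(Function<String, String> loader) {
        this.loader = loader;
    }

    String get(String key) {
        String cached = cache.get(key);
        if (cached != null) {
            return cached;
        }

        CompletableFuture<String> mine = new CompletableFuture<>();
        CompletableFuture<String> other = inFlight.putIfAbsent(key, mine);
        if (other != null) {
            return other.join(); // another request holds the claim: wait for its value
        }

        try {
            // Re-check: the entry may have been rebuilt between our miss and our claim.
            String value = cache.get(key);
            if (value == null) {
                dbCalls.incrementAndGet();
                value = loader.apply(key);
                cache.put(key, value);
            }
            mine.complete(value);
            return value;
        } catch (RuntimeException e) {
            mine.completeExceptionally(e); // waiters fail too instead of hanging
            throw e;
        } finally {
            inFlight.remove(key, mine); // release the claim
        }
    }
}
```

If 20 threads call `get("1")` on an empty cache at once, the loader runs once and the other 19 callers receive the same value. They still wait for the rebuild, so this removes the database spike but not the latency of the first load.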

3. Cache Preloading

Refresh frequently used data before expiration.
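One way to do this is a scheduled task that reloads hot keys on an interval shorter than the TTL, so readers never find the entry missing. The sketch below assumes that approach; `PreloadSketch`, `keepWarm`, and the in-memory map standing in for Redis are hypothetical names, and the choice of which keys count as "frequently used" is left to the caller.

```java
import java.util.concurrent.ConcurrentHashMap;
import java.util.concurrent.Executors;
import java.util.concurrent.ScheduledExecutorService;
import java.util.concurrent.TimeUnit;
import java.util.function.Supplier;

class PreloadSketch {
    private final ConcurrentHashMap<String, String> cache = new ConcurrentHashMap<>();
    private final ScheduledExecutorService scheduler = Executors.newSingleThreadScheduledExecutor();

    // Loads `key` now and then again every refreshMillis; pick an interval shorter than the TTL.
    void keepWarm(String key, Supplier<String> loader, long refreshMillis) {
        scheduler.scheduleAtFixedRate(() -> {
            try {
                cache.put(key, loader.get());
            } catch (RuntimeException e) {
                // Keep the previous value; an uncaught exception would cancel all future refreshes.
            }
        }, 0, refreshMillis, TimeUnit.MILLISECONDS);
    }

    String get(String key) {
        return cache.get(key);
    }

    void shutdown() {
        scheduler.shutdownNow();
    }
}
```

With a 30-second TTL, a refresh interval of around 20 to 25 seconds would keep the entry populated. The TTL itself can stay in place as a safety net in case the refresher stops.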

4. Stale-While-Revalidate

Serve old data while refreshing in the background.
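A minimal single-key sketch of this pattern follows. Everything in it is an assumption for illustration: `StaleWhileRevalidateSketch`, the injectable clock and executor, and the in-memory entry standing in for Redis. With Redis, the equivalent would be to store a logical "fresh until" timestamp next to the value and give the key a longer physical TTL.

```java
import java.util.concurrent.Executor;
import java.util.concurrent.atomic.AtomicBoolean;
import java.util.function.LongSupplier;
import java.util.function.Supplier;

class StaleWhileRevalidateSketch {
    static final class Entry {
        final String value;
        final long freshUntilMillis;

        Entry(String value, long freshUntilMillis) {
            this.value = value;
            this.freshUntilMillis = freshUntilMillis;
        }
    }

    private volatile Entry entry;
    private final AtomicBoolean refreshing = new AtomicBoolean(false);
    private final Supplier<String> loader; // hypothetical database read
    private final long ttlMillis;
    private final LongSupplier clock;
    private final Executor executor;       // runs the background refresh

    StaleWhileRevalidateSketch(Supplier<String> loader, long ttlMillis,
                               LongSupplier clock, Executor executor) {
        this.loader = loader;
        this.ttlMillis = ttlMillis;
        this.clock = clock;
        this.executor = executor;
    }

    String get() {
        Entry current = entry;
        long now = clock.getAsLong();
        if (current == null) {
            // Cold start: nothing to serve yet, so load synchronously (concurrent cold reads may each load).
            String value = loader.get();
            entry = new Entry(value, now + ttlMillis);
            return value;
        }
        // Past the TTL: start at most one refresh and do not wait for it.
        if (now >= current.freshUntilMillis && refreshing.compareAndSet(false, true)) {
            executor.execute(() -> {
                try {
                    entry = new Entry(loader.get(), clock.getAsLong() + ttlMillis);
                } finally {
                    refreshing.set(false);
                }
            });
        }
        return current.value; // fresh or stale, returned immediately
    }
}
```

In production the executor would be a thread pool, for example `Executors.newSingleThreadExecutor()`, so that the caller returns the stale value immediately while the reload happens elsewhere. The trade-off is that readers can see data older than the TTL for the duration of one refresh.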

Final Thoughts

Redis caching is powerful — but incomplete without proper expiration handling.

The system may appear fast under average metrics, but real-world performance tells a different story.

The most dangerous performance issues are the ones you don’t see.